Every CIO conversation I've had this year eventually gets to the same place: GPU infrastructure. Not theoretically — concretely. Boards are approving eight-figure compute budgets. Procurement teams are evaluating NVIDIA rack configurations. Finance is running depreciation models on hardware that didn't exist two years ago. And if you're selling enterprise software into IT budgets right now, that infrastructure layer is the context in which every deal you're working lives.

The problem is that most enterprise sellers don't know the difference between an H100 and a GB200. They can't place DGX in relation to HGX. They've heard "Blackwell" as a buzzword but couldn't explain what it actually changed. That's a gap — not because you need to be an ML engineer, but because understanding the hardware tier tells you exactly where a company is in its AI journey and what they're about to need next.

This is that map. It is not a spec sheet: it traces where AI compute money is going, and why every enterprise seller should care which tier their customers are on.

The GPU Family Tree

NVIDIA's data center GPU lineup has three active generations you'll encounter in enterprise accounts: Ampere (A100), Hopper (H100/H200), and Blackwell (B100/B200/GB200). Each generation roughly doubles or triples the performance of the last for AI workloads. Here's what you actually need to know about each.

H100 (SXM)

| Spec | Value |
| --- | --- |
| Memory | 80 GB HBM3 |
| Bandwidth | 3.35 TB/s |
| Key use | LLM training, fine-tuning, inference at scale |
| Interconnect | NVLink 4.0 (900 GB/s) |

H200

| Spec | Value |
| --- | --- |
| Memory | 141 GB HBM3e |
| Bandwidth | 4.8 TB/s |
| Key use | Larger model inference, memory-bound workloads |
| Position | H100 upgrade path before Blackwell availability |

PCIe inference card (specs match the L40S)

| Spec | Value |
| --- | --- |
| Memory | 48 GB GDDR6 |
| Bandwidth | 864 GB/s |
| Key use | Enterprise inference, multimodal AI, video AI |
| Form factor | Standard PCIe, no NVLink required |
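The memory figures in these tables matter because they set a floor on how many cards a model needs before any performance question comes up. The sketch below is a rough back-of-the-envelope check, not a sizing tool: the 70-billion-parameter model and the 16-bit (2 bytes per parameter) weights are assumptions chosen for illustration, and the calculation ignores KV cache, activations, and framework overhead, all of which push real requirements higher.

```python
import math

# Per-GPU memory in GB, as listed in the spec tables above.
MEMORY_GB = {"80 GB HBM3": 80, "141 GB HBM3e": 141, "48 GB GDDR6": 48}


def weights_gb(params_billion: float, bytes_per_param: int = 2) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * bytes_per_param


def min_gpus(params_billion: float, gpu_memory_gb: float,
             bytes_per_param: int = 2) -> int:
    """Minimum cards needed just to hold the weights.

    Ignores KV cache, activations, and runtime overhead, so treat the
    result as a lower bound rather than a deployment plan.
    """
    return math.ceil(weights_gb(params_billion, bytes_per_param) / gpu_memory_gb)


if __name__ == "__main__":
    # Hypothetical 70B-parameter model with 16-bit weights (140 GB).
    for label, capacity in MEMORY_GB.items():
        print(f"{label}: at least {min_gpus(70, capacity)} GPU(s)")
```

Under those assumptions the weights alone span two of the 80 GB cards but fit on a single 141 GB card, which is the practical meaning of "memory-bound workloads" in the second table.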

The Blackwell Era

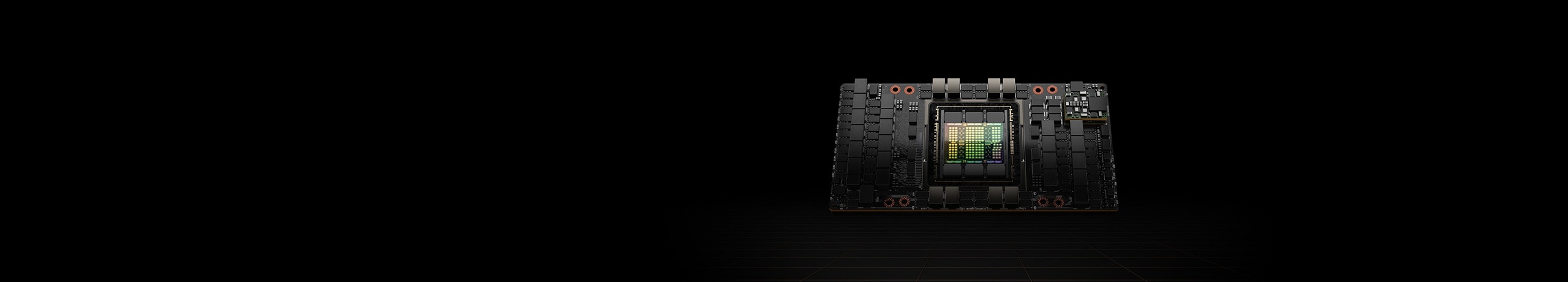

The GB200 and GB300 NVL72 represent something meaningfully different from a GPU upgrade cycle. These aren't single cards you slot into a server — they're rack-scale systems. The NVL72 designation means 72 GPUs connected via NVLink in a single integrated chassis. The compute, networking, and power delivery are co-designed as one unit. You don't buy a GB200 NVL72 GPU; you buy a GB200 NVL72 rack.

The headline number NVIDIA uses is a 30x inference throughput improvement over the H100 for large language model workloads. That number requires unpacking — it's achieved through a combination of faster per-GPU performance, NVLink Switch chips that eliminate inter-node bottlenecks, and the GB200 Superchip architecture that pairs two Blackwell GPUs with a Grace CPU on a single package. The CPU-GPU bandwidth goes from PCIe speeds to 900 GB/s.

What this means practically: a company that needed 100 H100s to serve a frontier model at acceptable latency may need only a handful of Blackwell GPUs, a small fraction of one 72-GPU GB200 NVL72 rack, to do the same job, provided NVIDIA's headline speedup holds for its workload. At NVIDIA's pricing and the economics of GPU depreciation, the math can actually work in favor of the newer hardware for inference-heavy shops. That's why enterprise accounts with serious AI ambition are making GB200/GB300 decisions now, even at significantly higher capital cost.
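A quick way to pressure-test a sizing claim like this is to run the arithmetic at several speedup values rather than only the headline one. The sketch below assumes the speedup applies per GPU and scales linearly, which is a simplification; the 10x and 5x rows are hypothetical "what if the real number is lower" cases, not measured results.

```python
import math

GPUS_PER_NVL72_RACK = 72  # from the NVL72 designation


def blackwell_gpus_needed(h100_count: int, speedup: float) -> int:
    """GPUs needed to match an H100 fleet, assuming a per-GPU speedup."""
    return math.ceil(h100_count / speedup)


def rack_fraction(gpu_count: int) -> float:
    """Share of one NVL72 rack that the given GPU count represents."""
    return gpu_count / GPUS_PER_NVL72_RACK


if __name__ == "__main__":
    h100_fleet = 100
    # 30x is the vendor headline figure; 10x and 5x are illustrative only.
    for speedup in (30, 10, 5):
        gpus = blackwell_gpus_needed(h100_fleet, speedup)
        print(f"{speedup}x speedup: {gpus} GPUs "
              f"({rack_fraction(gpus):.0%} of one rack)")
```

Even at a sixth of the headline speedup, the equivalent capacity stays well under a single rack, which is why the unit of purchase (a whole rack) matters as much as the per-GPU number.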

The GB300 NVL72 follows the same architecture with further memory and bandwidth improvements: 288 GB HBM3e per GPU versus 192 GB in the GB200, plus higher interconnect bandwidth. It's the current top of the stack for accounts ordering today.

The System Stack

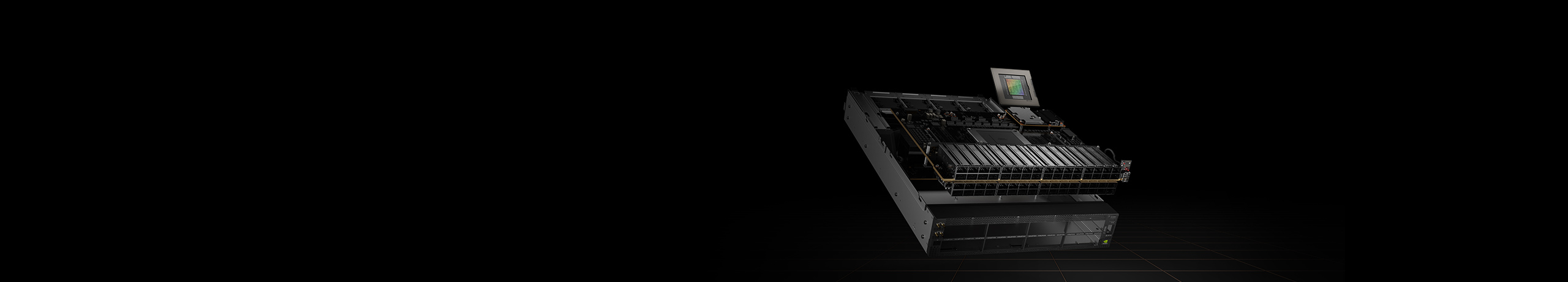

Once you understand the GPU tier, the next question is the system form factor. NVIDIA sells compute at every level of the stack — from a single-socket desktop to a multi-rack supercomputer. Knowing where an account sits tells you a lot about their AI maturity and what they're likely to need from software vendors.

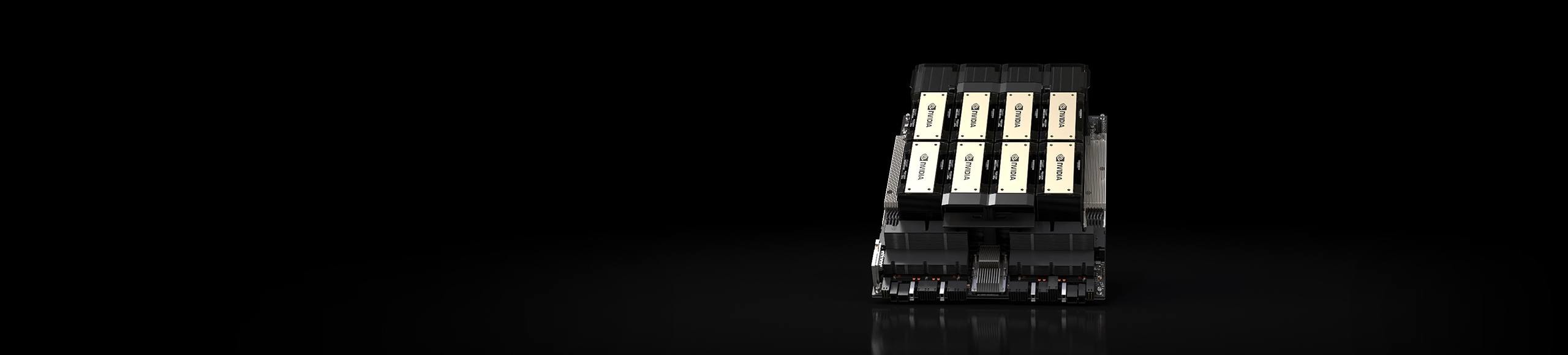

The key distinction between DGX and HGX is procurement path, not performance. A DGX server is an NVIDIA-branded, NVIDIA-shipped system. An HGX server uses the same GPU baseboard but is assembled and sold by an OEM. Enterprise IT organizations that already have a Dell or HPE relationship often land on HGX for that reason alone: same compute, different box on the invoice.

DGX SuperPOD is a different category entirely. It's a complete validated reference architecture for AI supercomputing — NVIDIA designs the rack layout, certifies the networking, validates the storage configuration, and provides software support for the full stack. Buying a SuperPOD is less like buying hardware and more like buying an AI infrastructure program. The accounts that go this route are committing to a multi-year AI infrastructure buildout, not a one-time purchase.

The Networking Layer

At the scale of modern AI clusters, the GPU interconnect fabric is as important as the GPUs themselves. NVIDIA controls this layer too, through two product families: Quantum InfiniBand and Spectrum-X Ethernet. Both target the same problem — when you have hundreds or thousands of GPUs working together on a single training run, any bottleneck in how they communicate with each other caps your effective compute utilization.

Quantum InfiniBand has been the traditional choice for tightly coupled HPC and AI training workloads. It provides extremely low latency and is purpose-built for the all-to-all communication patterns that large model training requires. The current generation, Quantum-2 InfiniBand, supports 400 Gb/s per port — fast enough that model parallelism across hundreds of nodes becomes practical rather than prohibitive.

Spectrum-X is newer and designed specifically for AI workloads over Ethernet. The pitch is that Ethernet is the networking standard the rest of enterprise IT already runs on — routing, security, operations tooling — and Spectrum-X delivers InfiniBand-class performance without requiring a separate network fabric. For enterprise accounts that want to integrate AI compute with their existing networking infrastructure rather than run a parallel InfiniBand island, this is the path NVIDIA offers.

The Spectrum-4 switch at the core of the platform supports 400 Gb/s Ethernet ports with an aggregate switching capacity of 51.2 Tb/s. At that throughput, a 512-GPU cluster can communicate with effectively zero network-imposed bottlenecks during training. That matters because the single most common cause of GPU utilization falling below 60% in early enterprise clusters is network underprovisioning — the GPUs are waiting for weights and gradients more than they're computing.
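The switch numbers above translate into a port count, and from there into a rough fabric size. The sketch below works through that arithmetic under assumptions that are not from the article: one 400 Gb/s port per GPU, and a two-tier non-blocking leaf-spine layout in which each leaf switch splits its ports evenly between GPUs and uplinks. Real designs vary in NICs per GPU, rail layout, and oversubscription.

```python
import math

SWITCH_CAPACITY_GBPS = 51_200  # 51.2 Tb/s aggregate, from the text
PORT_SPEED_GBPS = 400          # 400 Gb/s ports, from the text


def ports_per_switch(capacity_gbps: int = SWITCH_CAPACITY_GBPS,
                     port_gbps: int = PORT_SPEED_GBPS) -> int:
    """Number of full-speed ports the aggregate capacity supports."""
    return capacity_gbps // port_gbps


def leaf_spine_size(gpu_count: int, ports: int) -> tuple[int, int]:
    """(leaf, spine) switch counts for a non-blocking two-tier fabric.

    Assumes one port per GPU and a 1:1 split of each leaf's ports
    between downlinks (to GPUs) and uplinks (to spines).
    """
    downlinks_per_leaf = ports // 2
    leaves = math.ceil(gpu_count / downlinks_per_leaf)
    total_uplinks = leaves * (ports - downlinks_per_leaf)
    spines = math.ceil(total_uplinks / ports)
    return leaves, spines


if __name__ == "__main__":
    ports = ports_per_switch()
    leaves, spines = leaf_spine_size(512, ports)
    print(f"{ports} ports per switch; 512 GPUs -> "
          f"{leaves} leaf + {spines} spine switches")
```

Under these assumptions a 512-GPU cluster needs on the order of a dozen switches to avoid oversubscription. Cutting that count is exactly the kind of underprovisioning that leaves GPUs waiting on the network.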

What This Means If You're Selling Into AI Budgets

Here's the practical extraction for enterprise sellers. You don't need to know the FLOPS rating of every GPU. You need to be able to read the signals that the hardware tier gives you about where an account is, what they've committed to, and where they're going.

- GPU allocation is a budget signal, not just a tech decision. When a CIO approves a 64-node H100 cluster, they've already won an internal fight for AI infrastructure funding. That same account now has a team to justify the investment. They need software — workflow orchestration, observability, data pipelines, integration with the SaaS tools the rest of the company uses. That's your entry point. The hardware budget unlocked the software conversation.

- Accounts buying SuperPODs are in multi-year buildouts. A DGX SuperPOD commitment isn't a one-time hardware purchase — it's a declaration that AI is a core business function. These accounts are building dedicated ML engineering teams, governance frameworks, and internal tooling. The full software and services spending that follows a SuperPOD decision often dwarfs the hardware cost over a three-year horizon. If a prospect mentions SuperPOD evaluation, you are talking to an account in the middle of their AI infrastructure decade.

- The GPU generation tells you where they are in the journey. An account running A100s is managing a workload that was built 2–3 years ago. They're likely in maintenance mode on that infrastructure, possibly looking at whether to migrate to Hopper or wait for Blackwell. An account actively evaluating GB200 is planning 2–3 years out — they're in design and architecture mode, which means all the software decisions around that cluster are open. An account on H100 in production is in the scale-and-optimize phase. Each tier suggests a different conversation and a different set of adjacent needs.

- The DGX ecosystem creates vendor dependency — and adjacency. When a company standardizes on DGX SuperPOD with NVIDIA AI Enterprise software, they've bought into an ecosystem that connects through certified integrations to specific platforms. NVIDIA maintains a partner ecosystem of ISVs whose software is validated on DGX infrastructure. Enterprise platforms that have that certification get preferential access to accounts standardizing on DGX. For Freshworks or any enterprise SaaS with an AI integration story, understanding whether your platform is part of the NVIDIA partner ecosystem — or could be — is a legitimate strategic question. Accounts that have committed to NVIDIA's full stack want their software vendors inside that ecosystem.
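For sellers who keep account notes in a CRM or a script, the generation-to-journey reading above can be captured as a small lookup. This is only an illustrative sketch: the `TierSignal` type, the field names, and the `read_signal` helper are hypothetical, and the phase labels simply restate the bullets above rather than any NVIDIA or industry taxonomy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TierSignal:
    phase: str    # where the account likely is in its AI journey
    posture: str  # what that implies for the conversation


# Hypothetical lookup restating the bullets above; not an official taxonomy.
SIGNALS = {
    "A100": TierSignal(
        phase="maintenance",
        posture="workload built 2-3 years ago; weighing Hopper now vs. waiting for Blackwell",
    ),
    "H100": TierSignal(
        phase="scale-and-optimize",
        posture="in production; team must justify the investment with software around it",
    ),
    "GB200": TierSignal(
        phase="design and architecture",
        posture="planning 2-3 years out; software decisions around the cluster are open",
    ),
}


def read_signal(gpu_mentioned: str) -> Optional[TierSignal]:
    """Return the journey signal for a GPU name, or None if it is not mapped."""
    return SIGNALS.get(gpu_mentioned.strip().upper())


if __name__ == "__main__":
    for name in ("a100", "H100", "gb200", "V100"):
        signal = read_signal(name)
        print(name, "->", signal.phase if signal else "no signal mapped")
```

The value is less in the code than in the discipline: writing the mapping down forces a decision about which conversation each tier calls for before the call starts.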

If your champion mentions "moving to Blackwell," their AI buildout just went enterprise-scale. That's not an IT upgrade conversation — it's a transformation program. The software decisions around that cluster are being made right now. Be in the room.

Why This Makes You a Better Seller, Not Just a Better Engineer

I've spent time learning NVIDIA's stack not because I'm trying to become a data center architect, but because I've watched deals stall when sellers can't speak to the infrastructure context the CIO is living in. When your champion says their team is "evaluating Blackwell for the next cluster refresh" and you know what that means — the timeline, the budget cycle, the complexity of that decision, the software gaps it creates — you can have a materially different conversation than the seller who nods and changes the subject.

Enterprise AI is still in the infrastructure investment phase. The GPU layer is where the serious budget is moving first. The software and services layer follows. Sellers who understand the hardware map are better positioned to time that follow-on conversation correctly — not too early, when the infrastructure isn't in place, and not too late, when the decisions have already been made. The field guide is the advantage.